How to Choose the Right AI Agent Development Partner for Your Business


A practical buyer's guide for businesses evaluating AI agent vendors: what to look for, what to avoid, and what questions to ask before you commit.

Somewhere between the boardroom conversations about automation and the first vendor presentation, most business leaders hit the same wall: every company in the room says they build AI agents, but none of them say the same thing when you ask what that actually means.

That confusion is not accidental. AI agent development has become a crowded, loosely defined space where the distance between a genuinely capable partner and a company that learned the vocabulary last quarter can be hard to spot from a proposal alone. The stakes of getting this wrong are high: a poorly built agent can introduce new errors, erode customer trust, and create integration headaches that outlast the original problem you hired someone to solve.

The market for AI agent development services has expanded fast, and quality varies enormously. This guide is written for the decision-makers doing the evaluation: operations leads, CTOs, and founders who need a clear framework for separating the partners worth talking to from the ones worth passing on. No fluff. Just the criteria that matter.

First, Be Clear on What You're Actually Building

Before you evaluate a single vendor, you need a working definition of what you need — because 'AI agent' is doing a lot of heavy lifting as a term right now. At its most useful, an AI agent is a system that can perceive inputs, reason over them, take actions, and adjust its behavior based on outcomes — autonomously, within defined boundaries.

That definition covers an enormous range of practical applications: customer support agents that handle complex multi-turn conversations without human handoff, operations agents that monitor systems and trigger workflows based on conditions, research agents that gather, synthesize, and surface information on demand, and sales agents that qualify leads and personalize outreach at scale.

Each of those use cases requires a meaningfully different technical approach, different integration depth, and different success metrics. A vendor that is genuinely strong at conversational customer agents may have very little relevant experience with backend operations automation. Start by being specific about your use case — the more precise you are, the more quickly you will identify whether a vendor has actually solved your problem before.

The most reliable indicator that a vendor understands your use case: they push back on your requirements. A partner who challenges your assumptions and asks hard questions about edge cases is demonstrating domain knowledge. A partner who agrees with everything in Slide 1 is demonstrating eagerness.

The Six Evaluation Criteria That Actually Predict Partner Quality

1. Depth of Technical Stack, Not Breadth of Buzzwords

The most important technical question to ask any prospective partner is not what frameworks they use — it is what choices they make and why. Strong AI agent developers have informed opinions about when to use RAG versus fine-tuning, when agentic frameworks like LangChain or CrewAI are the right tool and when they introduce unnecessary complexity, and how they handle tool-calling reliability, memory architecture, and agent orchestration at scale.

Ask them to walk you through the technical architecture of a recent agent deployment that is similar to your use case. If the answer is fluent, specific, and candid about trade-offs, that is a meaningful signal. If it sounds like a slide deck about AI capabilities in general, move on.

2. Integration Experience with Your Existing Systems

An AI agent that cannot connect to your existing data sources, APIs, and workflows is not an agent — it is a prototype. The firms worth engaging have a track record of building agents that live inside real enterprise environments: CRMs, ERPs, ticketing systems, data warehouses, communication platforms, and legacy infrastructure that was not designed with AI in mind.

Ask specifically: What integrations have they built before? How do they handle authentication and security across system boundaries? What is their approach when a downstream API is unreliable or poorly documented? The answers will tell you far more about real-world readiness than any technical capabilities slide.

3. Proven Delivery Across Markets and Industries

Geography and market exposure matter more than many buyers realize. A firm that has only deployed agents in one regulatory environment may be genuinely unprepared for the compliance requirements, data residency rules, and enterprise procurement expectations of your market.

Dextra Labs, for instance, operates as a custom AI agent development company across Singapore, the United States, the United Kingdom, and India — markets with meaningfully different enterprise expectations, compliance landscapes, and integration ecosystems. That kind of multi-market delivery track record is a practical signal that a firm has built agents for varied, real-world conditions, not just controlled pilots.

When reviewing any firm's portfolio, look for case studies that name the industry, describe the integration complexity, and include measurable outcomes. A vague reference to 'a Fortune 500 client in financial services' tells you very little. A case study that explains what the agent did, what it connected to, and what changed after deployment tells you far more.

4. Security and Governance Architecture

AI agents that operate autonomously — especially those with access to sensitive data or the ability to trigger real-world actions — introduce security and governance requirements that many early-stage vendors have not fully worked through. Before engaging any partner, understand their approach to agent permissions and scope boundaries, audit logging and explainability, human-in-the-loop override mechanisms, data handling and residency, and compliance with relevant frameworks for your industry.

This is not theoretical risk management. An agent with misconfigured permissions that accesses data it should not, or triggers an action based on a misunderstood input, creates liability that lands squarely on your organization. The partner you choose should be thinking about these constraints from the architecture stage, not adding them as an afterthought before go-live.
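To make the idea of scope boundaries, human override, and audit logging more concrete, here is a minimal sketch of the kind of guard a partner might place between an agent and the actions it can trigger. The `ActionGate` class, the action names, and the approver label are all hypothetical and only illustrate the pattern; a real deployment would tie this to your identity, logging, and approval systems.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class ActionGate:
    """Hypothetical guard between an agent and the tools it may call."""
    allowed: set          # actions the agent may run on its own
    needs_approval: set   # actions that require a human sign-off
    audit_log: list = field(default_factory=list)

    def check(self, action: str, approved_by: Optional[str] = None) -> str:
        if action in self.allowed:
            decision = "allow"
        elif action in self.needs_approval:
            # Human-in-the-loop: run only once someone has signed off.
            decision = "allow" if approved_by else "escalate"
        else:
            # Anything not explicitly listed is out of scope.
            decision = "deny"
        # Every decision is recorded, including denials.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "action": action,
            "approved_by": approved_by,
            "decision": decision,
        })
        return decision


gate = ActionGate(allowed={"read_ticket"}, needs_approval={"issue_refund"})
print(gate.check("read_ticket"))                             # allow
print(gate.check("issue_refund"))                            # escalate
print(gate.check("issue_refund", approved_by="ops-lead"))    # allow
print(gate.check("delete_customer"))                         # deny
```

The design choice worth asking a vendor about is the default: in this sketch, an action that nobody listed is denied rather than allowed.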

5. Post-Deployment Support and Iteration Commitment

AI agents degrade. Models update, data distributions shift, user behavior evolves, and edge cases emerge that no pre-deployment testing anticipated. The question is not whether your agent will need adjustment after launch — it will. The question is whether your partner will be there when it does, or whether the engagement ends at delivery.

Look for partners who offer structured post-deployment monitoring, defined SLAs for production issues, a retraining and fine-tuning roadmap as new data accumulates, and a clear process for incorporating user feedback into agent improvement. Vendors who treat deployment as the finish line have misunderstood what production AI actually requires.

6. Transparent Pricing Without Hidden Scale Costs

Pricing models for AI agent development vary significantly, and the differences matter more than most buyers realize at the proposal stage. The three most common structures are:

- Fixed-scope project pricing: A defined deliverable for a defined cost. Works well when requirements are clear and bounded. Risk: scope creep and change orders if your needs evolve during build.

- Time-and-materials retainer: Flexible and adaptable, but requires active scope management on your end. Best for exploratory builds or ongoing iteration partnerships.

- Outcome-based or consumption pricing: Increasingly common for agent deployments where usage is variable. Understand the unit economics carefully — inference costs at scale can significantly change the total cost of ownership.

Beyond the headline pricing, ask about infrastructure cost pass-through, model API costs, costs for additional user seats or data connectors, and what a 3x or 5x growth in agent usage would cost. The vendors who can answer those questions clearly are the ones who have built agents at scale before.
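A rough way to pressure-test a proposal is to model the monthly bill at 1x, 3x, and 5x usage yourself. The sketch below assumes a simple cost structure (a flat infrastructure charge, a flat platform fee, and per-token inference pricing); every number in it is a made-up placeholder, and the function and parameter names are illustrative, not any vendor's actual pricing model.

```python
def monthly_cost(requests: int, tokens_per_request: int,
                 price_per_1k_tokens: float,
                 fixed_infra: float, platform_fee: float) -> float:
    """Estimate one month's cost under a simple, assumed pricing structure."""
    inference = requests * tokens_per_request / 1000 * price_per_1k_tokens
    return fixed_infra + platform_fee + inference


# Placeholder figures for illustration only.
baseline_requests = 50_000
for multiplier in (1, 3, 5):
    cost = monthly_cost(
        requests=baseline_requests * multiplier,
        tokens_per_request=2_000,
        price_per_1k_tokens=0.01,
        fixed_infra=1_500.0,
        platform_fee=2_000.0,
    )
    print(f"{multiplier}x usage: ${cost:,.2f} per month")
```

In this model the fixed charges stay flat while inference grows with usage, so ask each vendor which of their line items behave like which, and whether any step up in tiers as volume grows.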

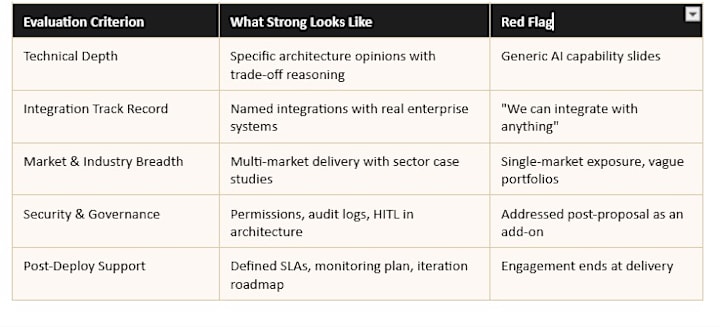

Quick-Reference Evaluation Scorecard

Use the six criteria above as a framework to score vendors, weighting each one by how much it matters for your specific situation.
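One simple way to apply the six criteria is a weighted scorecard. The sketch below is an assumption about how you might set it up, not a prescribed method: the weights, the 1 to 5 scale, and the sample vendor scores are placeholders you should replace with your own priorities.

```python
# Hypothetical weights for the six criteria in this guide; they sum to 1.0.
CRITERIA_WEIGHTS = {
    "technical_depth": 0.25,
    "integration_experience": 0.20,
    "delivery_track_record": 0.15,
    "security_governance": 0.20,
    "post_deployment_support": 0.10,
    "pricing_transparency": 0.10,
}


def weighted_score(scores: dict) -> float:
    """Combine per-criterion scores (1 = weak, 5 = strong) into one number."""
    missing = set(CRITERIA_WEIGHTS) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(CRITERIA_WEIGHTS[name] * scores[name] for name in CRITERIA_WEIGHTS)


# Sample scores for an imaginary vendor.
vendor_a = {
    "technical_depth": 4,
    "integration_experience": 3,
    "delivery_track_record": 5,
    "security_governance": 4,
    "post_deployment_support": 2,
    "pricing_transparency": 3,
}
print(f"Vendor A: {weighted_score(vendor_a):.2f} out of 5")
```

A single number is only a starting point for discussion. A low score on a criterion you cannot compromise on, such as security and governance, should rule a vendor out regardless of the total.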

Questions to Ask in Every Vendor Conversation

A good vendor conversation is less pitch, more problem-solving session. If the partner is doing most of the talking, you are in a sales meeting, not a discovery meeting. Come prepared with questions that require specifics:

- Walk me through a recent agent deployment that did not go as planned. What happened, and how did you recover?

- How do you handle agent hallucinations or confident incorrect outputs in a production environment? Show me an example of your mitigation approach.

- What is your handoff protocol when an agent reaches the boundary of its competence and a human needs to take over?

- How do you evaluate agent performance over time, and what does your iteration process look like after the first 90 days of production?

- What would you build differently if you were starting this type of engagement over again today?

The vendors who engage with those questions thoughtfully — who have real stories, real failure modes, and real lessons — are the ones who will build something that survives contact with your real users.

The Decision Is Not Just Technical

Choosing an AI agent development partner is ultimately a working relationship decision as much as a technical one. You are selecting a team that will be embedded in your business problems, your data, and your user experience for months — possibly years. The partner who wins on paper may not be the partner your team can actually build with.

If you are still at the stage of mapping out your AI strategy before committing to a build partner, working with an AI consulting company that has real implementation experience — rather than a pure strategy advisory firm — will give you a far more grounded view of what is buildable within your budget and timeline. Strategy without delivery experience produces roadmaps that look compelling in a deck and stall in execution.

After the evaluation criteria are met and the shortlist is down to two or three credible options, the final filter is often simpler: Which team asks the best questions? Which team is honest about what they do not know? Which team treats your constraints as design inputs rather than inconveniences?

Those signals are what separate partners who deliver agents that work in the real world from those who deliver agents that demo well in a controlled environment. Take your time with the evaluation. What you see in the selection process is exactly what you will get in the build.

About the Creator

Gulshan

SEO Services, Guest Post & Content Writer.
